Deep Dive: Knowledge Graph & Hybrid Search

Overview

The original LLM Wiki uses index.md as its primary navigation: a single catalog file listing every page. This approach works well up to about 200 pages, but beyond that the index becomes too long for the LLM to read in one pass.

This deep dive covers two layers added once the wiki grows past 200 pages: a Knowledge Graph (entities plus typed relationships, replacing flat wikilinks) and Hybrid Search (BM25 + vector + graph with RRF fusion, replacing brute-force reading of index.md).

Knowledge Graph Layer

1. Entity Extraction: Structured Knowledge from Text

Entity types

| Entity Type | Example | Key Attributes |
|---|---|---|
| Concept | Attention Mechanism | definition, properties, variants |
| Person | Ashish Vaswani | role, affiliation, contributions |
| Project | BERT, GPT-3 | status, owner, tech stack |
| Library | PyTorch, TensorFlow | version, language, use case |
| Decision | Use Transformer over RNN | made_by, date, rationale, status |
| Event | GPT-4 release | date, impact, related entities |
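The entity types in the table above could be modeled in code roughly as follows. This is a minimal sketch, not the wiki's actual schema: the names `EntityType`, `Entity`, and `make_entity` are hypothetical and introduced only for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class EntityType(Enum):
    """The six entity types listed in the table."""
    CONCEPT = "Concept"
    PERSON = "Person"
    PROJECT = "Project"
    LIBRARY = "Library"
    DECISION = "Decision"
    EVENT = "Event"


@dataclass
class Entity:
    name: str
    type: EntityType
    # Free-form key attributes, e.g. {"definition": "..."}
    attributes: dict[str, str] = field(default_factory=dict)
    # (relationship_type, target_name) pairs, e.g. ("part_of", "Transformer Architecture")
    relationships: list[tuple[str, str]] = field(default_factory=list)


def make_entity(name: str, type_label: str, **attributes: str) -> Entity:
    """Build an Entity; EntityType(...) raises ValueError for an unknown type label."""
    return Entity(name=name, type=EntityType(type_label), attributes=dict(attributes))


attention = make_entity(
    "Attention Mechanism",
    "Concept",
    definition="Scaled dot-product of Query, Key, Value vectors",
)
```

Validating the type label at construction time is one way to catch an LLM that invents a type outside the agreed list before a page is written.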

Entity extraction prompt

Entity extraction during ingest

Before writing wiki pages, extract all entities from this source.

For each entity found, list:

- Name: (exact name, consistent with existing wiki)

- Type: Concept | Person | Project | Library | Decision | Event

- Key attributes: (3-5 most important facts)

- Relationships to other entities: (type: name)

Format:

```

Entity: Attention Mechanism

Type: Concept

Attributes:

- Scaled dot-product of Query, Key, Value vectors

- Computational complexity: O(n²) in sequence length

- Enables direct dependency between any two positions

Relationships:

- part_of: Transformer Architecture

- enables: Long-range dependency modeling

- variant_of: [none — this IS the base]

- used_by: BERT, GPT series

```

Wait for my confirmation before creating pages.

2. Typed Relationships: Beyond Flat Wikilinks

Relationship taxonomy

Relationships in an entity page

wiki/entities/bert.md

```
# BERT

**Type**: Project
**Confidence**: 0.90

## Relationships

- **depends_on**: [[transformer-architecture]] - uses encoder-only stack
- **uses**: [[masked-language-modeling]] - core pre-training objective
- **uses**: [[next-sentence-prediction]] - secondary pre-training task
- **evolved_from**: [[elmo]] - BERT replaced ELMo as SOTA on NLP benchmarks
- **superseded_by**: [[roberta]] - same arch, better training (no NSP)
- **enables**: [[transfer-learning-nlp]] - fine-tune on downstream tasks
- **contradicts**: [[gpt-architecture]] - bidirectional vs autoregressive
- **part_of**: [[pre-training-paradigm]] - foundational to modern LLMs
```

Advantages

- Graph traversal queries: "what depends on X?" → walk dependency edges

- Contradiction chains: "what contradicts GPT-3?" → instant answers

- Impact analysis: "if we deprecate Redis?" → walk used_by edges downstream

- Timeline reconstruction: evolved_from/supersedes chains → automatic lineage

Disadvantages

- Typed relationships require the team (and the schema) to agree on a taxonomy
- The LLM can assign wrong relationship types, so edges need review
- More verbose pages → slightly slower ingest
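To make the typed relationships machine-readable, the relationship lines of an entity page can be parsed into edges. The sketch below assumes the line format `- **type**: [[target]]` used in the entity page example; the function names `parse_relationships` and `build_graph` are hypothetical.

```python
import re
from collections import defaultdict

# Matches lines such as: - **depends_on**: [[transformer-architecture]] ...
# Anything after the wikilink (the free-text note) is ignored.
REL_LINE = re.compile(r"^- \*\*(\w+)\*\*: \[\[([^\]]+)\]\]")


def parse_relationships(page_name: str, markdown: str) -> list[tuple[str, str, str]]:
    """Return (source, relationship_type, target) triples from one entity page."""
    edges = []
    for line in markdown.splitlines():
        match = REL_LINE.match(line.strip())
        if match:
            rel_type, target = match.groups()
            edges.append((page_name, rel_type, target))
    return edges


def build_graph(edges):
    """Index edges as graph[source][relationship_type] -> list of targets."""
    graph = defaultdict(lambda: defaultdict(list))
    for source, rel_type, target in edges:
        graph[source][rel_type].append(target)
    return graph


page = """\
## Relationships
- **depends_on**: [[transformer-architecture]]
- **uses**: [[masked-language-modeling]]
- **superseded_by**: [[roberta]]
"""
edges = parse_relationships("bert", page)
graph = build_graph(edges)
```

Keeping the relationship type as a key in the graph is what lets a query walk only `depends_on` edges, for example, instead of every link on the page.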

3. Graph Traversal Queries

Graph traversal query patterns

| Query pattern | Traversal | Example |
|---|---|---|
| Impact analysis | Walk used_by + depends_on edges outward | "What breaks if Redis goes down?" |
| Lineage / provenance | Walk evolved_from + preceded_by backwards | "What came before Transformer?" |
| Contradiction chain | Walk contradicts edges | "What does the wiki disagree about?" |
| Dependency graph | Walk depends_on recursively | "What does BERT need to work?" |
| Discovery | Walk enables edges | "What does attention mechanism enable?" |
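The traversal patterns in the table all reduce to a bounded walk over selected edge types. The following is a minimal breadth-first sketch; the toy `GRAPH` contents (including the `downstream-task` node) and the function name `traverse` are hypothetical, and a real wiki would build the graph from its entity pages.

```python
from collections import deque

# Hypothetical toy graph: graph[entity][edge_type] -> list of neighbor entities
GRAPH = {
    "attention-mechanism": {"used_by": ["transformer-architecture"]},
    "transformer-architecture": {"used_by": ["bert", "gpt-architecture"]},
    "bert": {"enables": ["transfer-learning-nlp"]},
    "transfer-learning-nlp": {"enables": ["downstream-task"]},
}


def traverse(graph, start, edge_types, max_depth=3):
    """Breadth-first walk over the given edge types.

    Returns one (depth, source, edge_type, target) tuple per newly
    discovered entity, in discovery order.
    """
    visited = {start}
    queue = deque([(start, 0)])
    found = []
    while queue:
        node, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not expand beyond the depth limit
        for edge_type in edge_types:
            for neighbor in graph.get(node, {}).get(edge_type, []):
                if neighbor in visited:
                    continue  # guards against cycles and duplicate visits
                visited.add(neighbor)
                found.append((depth + 1, node, edge_type, neighbor))
                queue.append((neighbor, depth + 1))
    return found


impacted = traverse(GRAPH, "attention-mechanism", ["used_by", "enables"])
```

The depth limit keeps the cost bounded on large graphs; here the entity four hops away is not reached with `max_depth=3`.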

Graph query prompt

Graph traversal query

Use graph traversal to answer: "What would be impacted if we deprecated the attention mechanism?"

Start at entity: [[attention-mechanism]]

Walk outward via edges: used_by, depends_on, enables

For each entity encountered:

- Note it

- Walk its outward edges (max depth: 3)

Return a dependency tree showing all impacted entities.

Format as a nested list with the relationship type on each edge.

Hybrid Search: Scale >200 Pages

4. BM25: Keyword Search with Stemming

BM25 formula
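For reference, Okapi BM25 in a commonly used form can be written as below. The notation is chosen here for illustration: $f(q_i, D)$ is the frequency of query term $q_i$ in document $D$, $|D|$ is the document length, $\mathrm{avgdl}$ is the average document length, $N$ is the corpus size, and $n(q_i)$ is the number of documents containing $q_i$. The parameters $k_1$ and $b$ control term-frequency saturation and length normalization.

```latex
\mathrm{score}(D, Q) = \sum_{i} \mathrm{IDF}(q_i)\,
  \frac{f(q_i, D)\,(k_1 + 1)}
       {f(q_i, D) + k_1\left(1 - b + b\,\frac{|D|}{\mathrm{avgdl}}\right)}

\mathrm{IDF}(q_i) = \ln\!\left(\frac{N - n(q_i) + 0.5}{n(q_i) + 0.5} + 1\right)
```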

BM25 scoring (Okapi BM25)

```python
import math
from collections import Counter


def bm25_score(query_terms: list, doc: str, corpus: list,
               k1: float = 1.5, b: float = 0.75) -> float:
    """
    BM25: term frequency with length normalization.

    k1 = term frequency saturation (default 1.5)
    b  = length normalization (default 0.75)
    """
    doc_terms = doc.lower().split()
    doc_len = len(doc_terms)
    # Tokenize the corpus once so document frequency counts whole tokens,
    # not substrings (e.g. "art" must not match "article").
    corpus_tokens = [set(d.lower().split()) for d in corpus]
    avg_len = sum(len(d.split()) for d in corpus) / len(corpus)
    tf = Counter(doc_terms)
    N = len(corpus)

    score = 0.0
    for term in query_terms:
        term = term.lower()
        # Inverse document frequency
        n_docs_with_term = sum(1 for tokens in corpus_tokens if term in tokens)
        idf = math.log((N - n_docs_with_term + 0.5) / (n_docs_with_term + 0.5) + 1)

        # Term frequency with saturation + length normalization
        tf_term = tf.get(term, 0)
        tf_norm = (tf_term * (k1 + 1)) / (tf_term + k1 * (1 - b + b * doc_len / avg_len))
        score += idf * tf_norm
    return score

# Practical: use the rank-bm25 or whoosh library
```

BM25 for LLM Wiki

Set up a BM25 index for wiki/

```python
import glob

from rank_bm25 import BM25Okapi


def build_wiki_bm25_index(wiki_dir: str):
    """Tokenize every markdown page under wiki_dir and build a BM25 index."""
    pages = []
    for filepath in glob.glob(f"{wiki_dir}/**/*.md", recursive=True):
        with open(filepath, encoding="utf-8") as f:
            content = f.read()
        pages.append({
            "path": filepath,
            "content": content,
            "tokens": content.lower().split(),
        })

    tokenized_corpus = [p["tokens"] for p in pages]
    bm25 = BM25Okapi(tokenized_corpus)
    return bm25, pages


def search_bm25(query: str, bm25, pages, top_k=10):
    """Return up to top_k (path, score) pairs with a positive BM25 score."""
    query_tokens = query.lower().split()
    scores = bm25.get_scores(query_tokens)
    ranked = sorted(enumerate(scores), key=lambda x: x[1], reverse=True)
    return [(pages[i]["path"], score) for i, score in ranked[:top_k] if score > 0]
```

5. Vector Search: Semantic Similarity

Embedding pipeline for the wiki

Vector index for wiki pages

```python
import glob

import faiss
import numpy as np
from sentence_transformers import SentenceTransformer


def build_wiki_vector_index(wiki_dir: str):
    model = SentenceTransformer('all-mpnet-base-v2')  # 768-dim, good quality/speed
    pages = []
    for filepath in glob.glob(f"{wiki_dir}/**/*.md", recursive=True):
        with open(filepath, encoding="utf-8") as f:
            content = f.read()
        # Chunk by section (H2 headers).
        # NOTE: split_by_sections is not defined in this article; it is assumed
        # to return a list of text chunks of at most max_tokens tokens each.
        chunks = split_by_sections(content, max_tokens=512)
        for chunk in chunks:
            pages.append({"path": filepath, "content": chunk})

    contents = [p["content"] for p in pages]
    embeddings = model.encode(contents, show_progress_bar=True)
    # FAISS expects contiguous float32 arrays
    embeddings = np.ascontiguousarray(embeddings, dtype="float32")

    # FAISS index for fast similarity search
    dim = embeddings.shape[1]
    index = faiss.IndexFlatIP(dim)  # Inner product (cosine after normalization)
    faiss.normalize_L2(embeddings)
    index.add(embeddings)
    return index, pages, model


def search_vector(query: str, index, pages, model, top_k=10):
    query_emb = np.ascontiguousarray(model.encode([query]), dtype="float32")
    faiss.normalize_L2(query_emb)
    scores, indices = index.search(query_emb, top_k)
    # FAISS pads with index -1 when fewer than top_k vectors exist
    return [(pages[i]["path"], float(scores[0][j]))
            for j, i in enumerate(indices[0])
            if i >= 0 and scores[0][j] > 0.3]
```

| Model | Dims | Speed | Quality |
|---|---|---|---|
| all-MiniLM-L6-v2 | 384 | ⚡ Fast | Good (personal wiki) |
| all-mpnet-base-v2 | 768 | Medium | Better (recommended) |
| text-embedding-3-small | 1536 | API call | Best (OpenAI API) |
| nomic-embed-text | 768 | Local | Best local alternative |
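The vector index code above calls a `split_by_sections` helper that the article does not define. One possible sketch is shown below. It is an assumption, not the author's implementation: it splits at H2 headers and approximates tokens by whitespace-separated words, whereas an embedding model counts subword tokens.

```python
import re


def split_by_sections(content: str, max_tokens: int = 512) -> list[str]:
    """Split markdown into chunks at H2 headers, capping each chunk's length.

    Tokens are approximated by whitespace-separated words (an assumption).
    Whitespace inside a chunk is collapsed to single spaces.
    """
    # Zero-width split before every line that starts with "## ",
    # so each header stays attached to its own section.
    sections = re.split(r"(?m)^(?=## )", content)
    chunks = []
    for section in sections:
        words = section.split()
        # Break oversized sections into consecutive windows of max_tokens words
        for start in range(0, len(words), max_tokens):
            chunks.append(" ".join(words[start:start + max_tokens]))
    return chunks


sample = "# Title\nintro text\n## A\none two three\n## B\nfour five"
chunks = split_by_sections(sample)
```

Chunking per section keeps each embedding focused on one topic, which tends to matter more for retrieval quality than the exact token cap.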

6. RRF Fusion: Combining Three Streams

RRF formula
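Written out, Reciprocal Rank Fusion sums a reciprocal-rank term over every ranked list $r$ in the set $R$ that contains the document. The symbol names here are illustrative; $k$ is the smoothing constant and $\mathrm{rank}_r(d)$ is the 1-based position of $d$ in list $r$.

```latex
\mathrm{RRF}(d) = \sum_{r \in R} \frac{1}{k + \mathrm{rank}_r(d)}

% Worked example with k = 60:
% d ranked 1st in one list and 2nd in another:
\mathrm{RRF}(d) = \frac{1}{61} + \frac{1}{62} \approx 0.01639 + 0.01613 = 0.03252
% d' ranked 1st in a single list only:
\mathrm{RRF}(d') = \frac{1}{61} \approx 0.01639
```

With $k = 60$ the individual terms are close in size, so appearing in several lists usually outweighs topping a single one.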

Reciprocal Rank Fusion

```python
from collections import defaultdict


def reciprocal_rank_fusion(result_lists: list[list[str]], k: int = 60) -> list[str]:
    """
    RRF: fuse multiple ranked lists.

    k = smoothing constant (default 60, from original paper)
    Score(doc) = Σ 1 / (k + rank_in_list_i)
    """
    scores = defaultdict(float)
    for ranked_list in result_lists:
        for rank, doc_id in enumerate(ranked_list, start=1):
            scores[doc_id] += 1 / (k + rank)

    # Sort by fused score descending
    return sorted(scores, key=scores.get, reverse=True)


# Example usage (lists truncated to three results each):
bm25_results = ["doc_a", "doc_c", "doc_b"]    # BM25 ranked
vector_results = ["doc_b", "doc_a", "doc_d"]  # Vector ranked
graph_results = ["doc_e", "doc_a", "doc_c"]   # Graph ranked

fused = reciprocal_rank_fusion([bm25_results, vector_results, graph_results])
# Returns: ["doc_a", "doc_b", "doc_c", "doc_e", "doc_d"]
```

Full hybrid search pipeline

Advantages

- RRF needs no weight tuning: it is rank-based and scale-invariant
- Each stream catches what others miss (complementary, not redundant)
- The graph stream adds structural reasoning that keyword and semantic search lack
- agentmemory: 95.2% on LongMemEval-S with three streams

Disadvantages

- Three systems to maintain (BM25 index, vector index, graph)
- Graph traversal cost grows with graph size
- The vector index needs a rebuild when wiki content changes
- Overkill for a wiki under 200 pages
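Putting the pieces of this section together, a hybrid search runs each stream on the query and fuses the rankings with RRF. The sketch below is one possible wiring under stated assumptions: `hybrid_search`, the `Retriever` type, and the three stub retrievers are hypothetical stand-ins for the real BM25, vector, and graph streams.

```python
from collections import defaultdict
from typing import Callable

# A retriever takes a query and returns page paths, best match first.
Retriever = Callable[[str], list[str]]


def rrf(result_lists: list[list[str]], k: int = 60) -> list[str]:
    """Reciprocal Rank Fusion over ranked lists of document ids."""
    scores = defaultdict(float)
    for ranked in result_lists:
        for rank, doc_id in enumerate(ranked, start=1):
            scores[doc_id] += 1 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


def hybrid_search(query: str, retrievers: list[Retriever], top_k: int = 10) -> list[str]:
    """Run every retriever on the query and fuse their rankings with RRF."""
    result_lists = [retrieve(query) for retrieve in retrievers]
    return rrf(result_lists)[:top_k]


# Stub retrievers with fixed output, standing in for the three streams
def bm25_stub(query: str) -> list[str]:
    return ["wiki/a.md", "wiki/c.md", "wiki/b.md"]


def vector_stub(query: str) -> list[str]:
    return ["wiki/b.md", "wiki/a.md", "wiki/d.md"]


def graph_stub(query: str) -> list[str]:
    return ["wiki/e.md", "wiki/a.md", "wiki/c.md"]


results = hybrid_search("attention", [bm25_stub, vector_stub, graph_stub], top_k=3)
```

Because RRF only looks at ranks, real retrievers that return (path, score) pairs would need their scores dropped before fusion, and several chunks from the same page should be collapsed to one path first.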

7. Benchmarks: Hybrid vs Index.md vs RAG

LongMemEval-S benchmark

| Approach | LongMemEval-S | Notes |
|---|---|---|
| RAG (vector only) | ~65-70% | Baseline, semantic only |
| BM25 only | ~60-65% | Good for exact terms, poor on semantic matches |
| BM25 + Vector | ~80-85% | Significant improvement |
| BM25 + Vector + Graph | 95.2% | agentmemory result (rohitg00) |
| index.md (LLM reads all) | ~70-75% | Good at small scale, degrades |

Scale Path & Tooling

8. Scale Breakpoints: When You Need What

Scale breakpoints

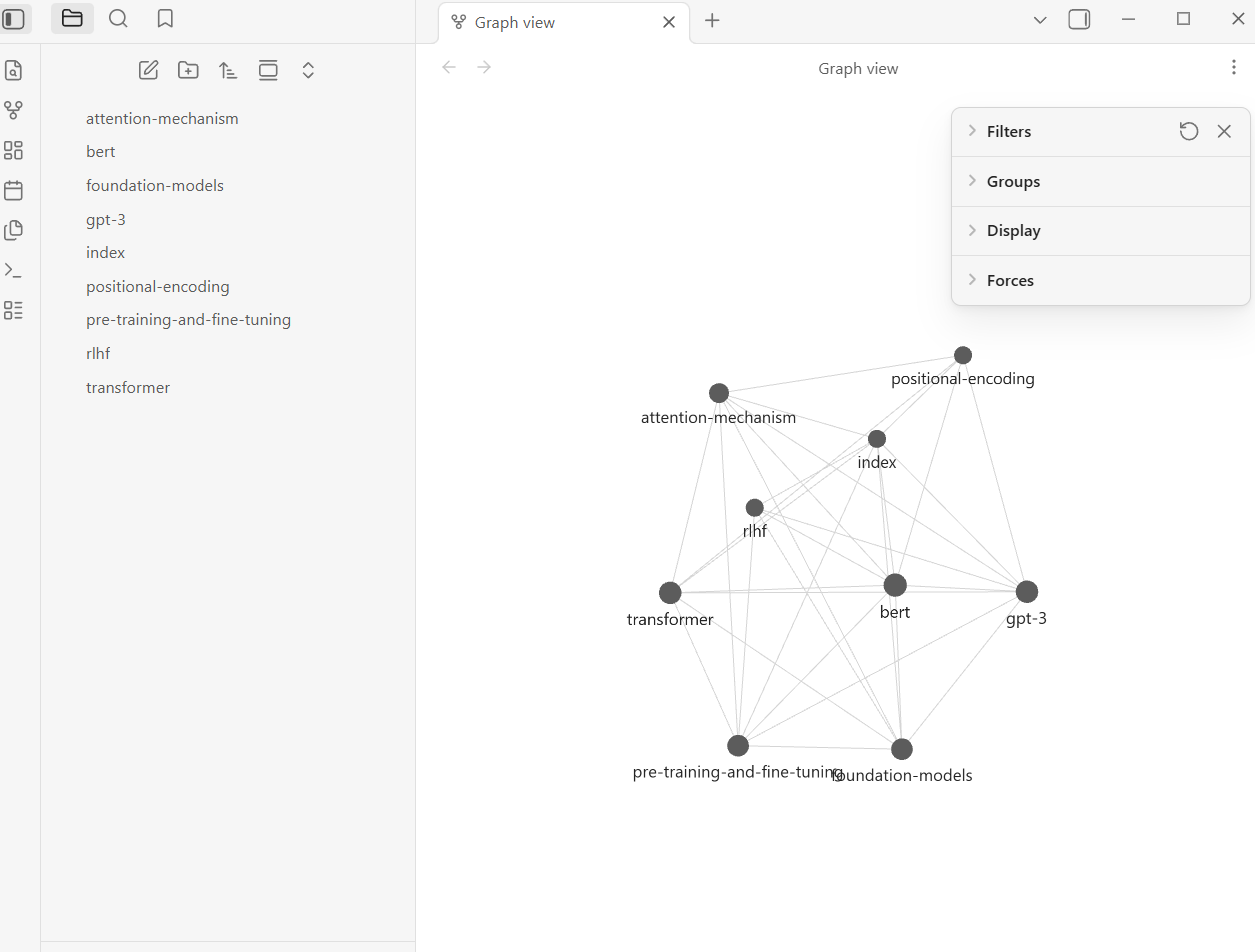

9. Obsidian: Visual Layer for the Knowledge Graph

Obsidian workflow

Obsidian Web Clipper

Install the Obsidian Web Clipper browser extension: it converts any webpage to markdown and saves it into raw/. Saving an article for ingest becomes as quick as creating an ordinary bookmark.

| Tool | Role in LLM Wiki |
|---|---|
| Obsidian | Read/browse wiki, graph view, backlinks |
| Claude Code | LLM agent: ingest, query, lint |
| Web Clipper | Save web articles → raw/ as markdown |
| VS Code | Alternative editor, better for large files |
| Git | Version control for wiki/ (optional but recommended) |

Summary

Knowledge graph and hybrid search are not the first step of an LLM Wiki; they are the third and fifth steps in the scaling path. Start with index.md. Add typed relationships when you start missing connections. Add BM25 when index.md gets too long. Add vectors when semantic queries fail. Add graph traversal when structural queries fail.

agentmemory's 95.2% on LongMemEval-S with BM25 + vector + graph shows that this approach works at production scale. But most personal wikis will never need that tier.